Artificial intelligence (AI) is rapidly transforming the medical field, revolutionizing how hospitals diagnose illnesses, manage patient care, and allocate resources. Machine learning algorithms can analyze vast amounts of medical data, identify patterns, and assist doctors in making faster and more accurate diagnoses. AI-powered systems are now being used to predict patient deterioration, recommend treatment plans, and even perform robotic surgeries. These advancements have the potential to enhance healthcare efficiency, reduce human error, and improve patient outcomes, making AI an invaluable tool in modern medicine.

However, this integration raises significant ethical concerns, particularly regarding the use of AI to predict patient survival rates and its role in clinical decision-making. Some hospitals now use these predictions to help determine patient treatment priorities. The central question remains: Should AI ever decide who gets treated first?

The Rise of AI in Healthcare

Artificial intelligence (AI) has been increasingly integrated into hospital emergency departments to assist with patient triage, aiming to enhance the accuracy and efficiency of assessments. One notable example is Johns Hopkins Hospital in Baltimore, Maryland, where researchers developed an AI tool designed to support emergency department nurses in evaluating incoming patients. This algorithm rapidly predicts the risk of several outcomes and recommends a triage level of care, providing explanations for its decisions.

In addition to triage, researchers with Johns Hopkins Medicine and computer scientists from Johns Hopkins University’s Whiting School of Engineering are leveraging AI to enhance early disease detection. They are analyzing thousands of abdominal scans in an effort to catch pancreatic tumors while they are still operable. Furthermore, another computer scientist developed an AI algorithm used at Johns Hopkins hospitals to help diagnose sepsis before it becomes fatal.

Similarly, in China, a 2024 study published on ScienceDirect reports that certain hospitals have implemented AI triage systems intended to operate independently, substituting for human triage nurses in determining the severity and priority of a patient’s condition. These AI systems aim to improve triage efficiency, reduce the burden on healthcare resources, and mitigate cross-infection risks during pandemics. However, the implementation of such systems raises questions about their role in patient care and the importance of maintaining human oversight.

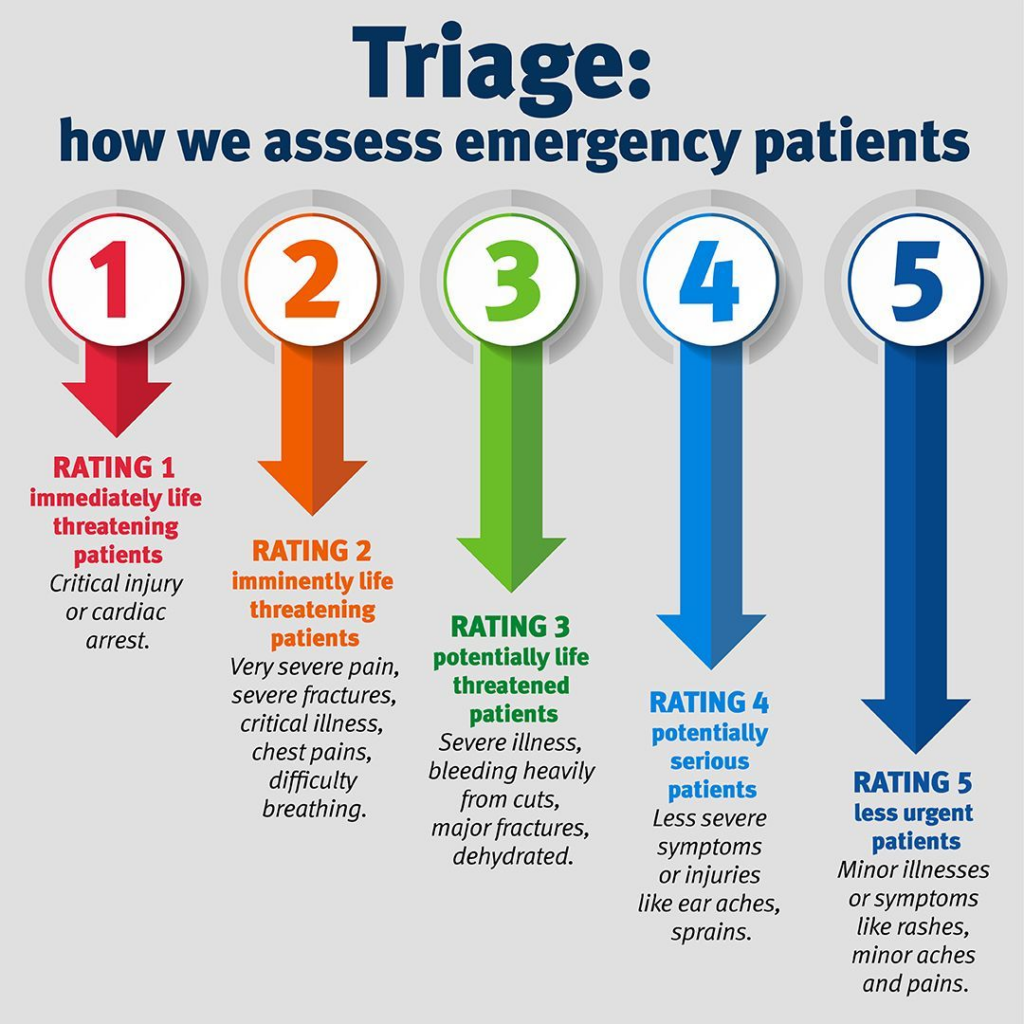

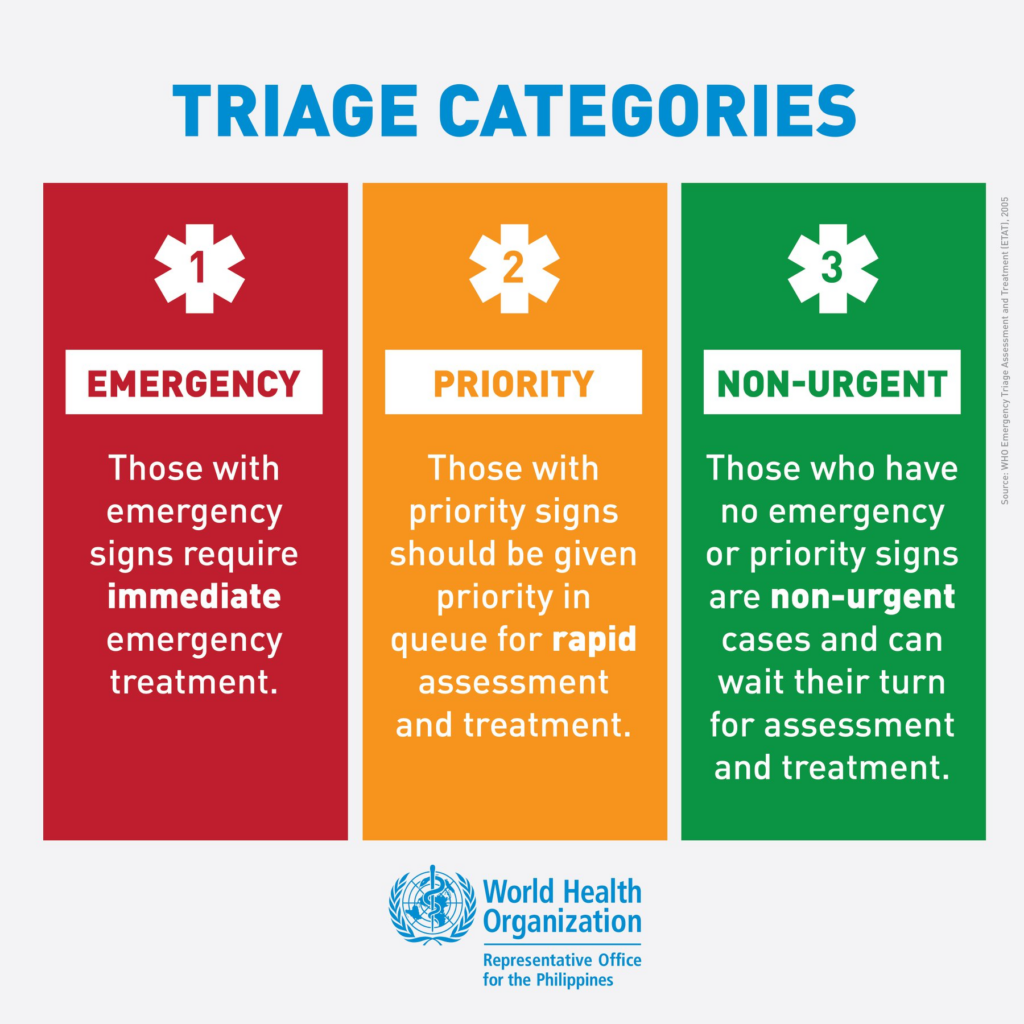

The integration of artificial intelligence (AI) and machine learning (ML) in healthcare has become a major point of interest and raises the question of its impact on the emergency department (ED) triaging process. One of the most controversial applications of AI in healthcare is its role in triage, determining which patients receive immediate treatment, particularly in emergency rooms and intensive care units. AI-driven triage systems use predictive analytics to assess patient survival rates and prioritize care accordingly. While this can optimize resource allocation, it raises profound ethical concerns. Should AI, rather than human doctors, decide who gets treated first? Could algorithmic bias result in unfair healthcare decisions?

A scoping review published in the Journal of Medical Internet Research examined the use of AI in hospital emergency department triage. The study found that machine learning models consistently demonstrated superior discrimination abilities compared to conventional triage systems, leading to significant enhancements in predictive accuracy, disease identification, and risk assessment. However, the study emphasized that AI should augment, not replace, the clinical judgment of healthcare professionals.

How AI is Used in Medical Decision-Making

Hospitals are increasingly using AI for predictive analytics to anticipate patient outcomes and optimize healthcare delivery. AI-powered models analyze vast datasets, including patient history, lab results, and real-time vitals, to identify individuals at risk of complications such as sepsis, cardiac arrest, or organ failure. For instance, AI systems like Epic’s Sepsis Model are deployed in hospitals to flag potential cases of sepsis before symptoms become critical, enabling timely intervention. By leveraging predictive analytics, hospitals aim to reduce mortality rates, prevent readmissions, and allocate medical resources more efficiently.

In emergency rooms, AI-driven triage systems assist healthcare professionals in prioritizing patients based on urgency. Hospitals use AI to analyze symptoms, vitals, and medical history, helping categorize patients into different risk levels. For example, AI-powered triage tools like the eTriage system in the UK or the AI-driven SmartTriage system in the U.S. guide emergency staff in determining who needs immediate care. While these systems enhance efficiency and reduce wait times, concerns persist about their accuracy and the necessity of human oversight to prevent misclassification of critical cases.

Machine learning algorithms have revolutionized disease diagnosis and the prediction of patient deterioration. AI-powered imaging tools can detect anomalies in X-rays, MRIs, and CT scans with precision, aiding in the early detection of cancers, stroke, and neurological disorders. Hospitals like Mayo Clinic and Johns Hopkins use AI to analyze pancreatic cancer scans and predict the likelihood of tumor progression. The Mayo Clinic has developed an AI model capable of detecting pancreatic cancer in CT scans approximately 475 days before clinical diagnosis, with an accuracy of 92%. This innovation represents a substantial leap in early cancer detection.

Additionally, deep learning models assess ICU patients’ vitals, alerting doctors to potential deteriorations before visible symptoms appear. These advancements improve diagnostic accuracy and patient outcomes but also raise ethical concerns regarding AI’s role in life-and-death decisions.

Can AI Be Trusted with Life-and-Death Decisions?

The use of AI in prioritizing patients raises serious ethical concerns, particularly regarding transparency, fairness, and the value of human life. While AI is designed to enhance efficiency and improve outcomes, its role in deciding who receives care first is fraught with moral dilemmas. Should a machine determine which patient gets a ventilator in an ICU crisis? Should an algorithm decide who qualifies for a life-saving surgery? These questions highlight the need for strict human oversight, because healthcare decisions involve more than just data: they require empathy, context, and ethical reasoning. Without proper safeguards, AI-driven triage could lead to impersonal and morally questionable choices.

Bias in AI models further complicates the issue. AI algorithms are trained on historical patient data, which may reflect existing racial, economic, or gender disparities in healthcare. Studies have shown that some AI systems underdiagnose diseases in minority populations or prioritize wealthier patients with more medical history available in digital records. For example, a 2019 study published in Science revealed that an algorithm widely used in U.S. hospitals to guide healthcare decisions exhibited racial bias, systematically favoring white patients over Black patients in allocating high-risk care management resources. The algorithm’s design, which relied on healthcare spending as a proxy for health needs, led it to underestimate the severity of illness in Black patients, resulting in disparities in care recommendations. Without addressing these biases, AI could unintentionally worsen inequality rather than improve care for all.
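To make the proxy problem concrete, here is a minimal sketch in Python. It is not the algorithm from the Science study; the patients, the groups "A" and "B", the spending figures, and the `top_k` helper are all invented for illustration. It assumes one group has the same or greater health need but lower recorded spending, and compares who gets selected for extra care when patients are ranked by past cost versus by need.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    name: str
    group: str               # hypothetical demographic group label
    chronic_conditions: int  # stand-in for true health need (assumption)
    annual_cost: float       # past healthcare spending in dollars (assumption)


def top_k(patients, key, k):
    """Return the k patients with the highest value of `key`."""
    return sorted(patients, key=key, reverse=True)[:k]


# Invented data: group B is sicker on average but has lower recorded
# spending, for example because of barriers to accessing care.
patients = [
    Patient("A1", "A", 2, 9000.0),
    Patient("A2", "A", 3, 12000.0),
    Patient("A3", "A", 1, 5000.0),
    Patient("B1", "B", 5, 7000.0),
    Patient("B2", "B", 4, 6000.0),
    Patient("B3", "B", 1, 2000.0),
]

# Rank by the proxy (spending) and by the quantity we actually care about (need).
by_cost = top_k(patients, key=lambda p: p.annual_cost, k=2)
by_need = top_k(patients, key=lambda p: p.chronic_conditions, k=2)

cost_names = [p.name for p in by_cost]
need_names = [p.name for p in by_need]

print("Selected by spending:", cost_names)
print("Selected by need:    ", need_names)
```

In this toy data set, ranking by spending selects only group A patients, while ranking by need selects only group B patients. Real systems are far more complex, but the sketch shows how a reasonable-looking proxy can quietly shift resources away from the people who need them most.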

Another critical challenge is the “black box” nature of AI. In systems built on complex algorithms such as deep learning, the internal workings and decision-making processes are opaque, so healthcare professionals cannot easily see how a conclusion was reached, even when they know the inputs and outputs.

Unlike traditional medical decision-making, where doctors can explain their reasoning, many AI algorithms operate in ways that are not easily interpretable. This lack of transparency can erode trust, making clinicians hesitant to rely on AI-driven recommendations. AI can assist in medical decision-making, but it cannot replace human judgment and empathy – two fundamental elements that define ethical and compassionate healthcare.

Real-World Consequences – Case Studies and Controversies

Successes of AI in Healthcare

Artificial intelligence (AI) has led to significant advancements in healthcare, notably in diagnostics and patient management. For example, Chelsea and Westminster Hospital, an NHS hospital in London, implemented an AI system capable of providing instant skin cancer diagnoses. Using a simple iPhone with a magnifying lens, clinicians capture images of suspicious moles, which the AI app analyzes within seconds. This system has achieved a 99.9% accuracy rate in ruling out melanoma, expediting patient care and reducing waiting times. The success of this AI tool has prompted its adoption across 20 hospitals within the NHS, collectively detecting approximately 13,000 cancer cases to date.

Failures and Bias in AI Systems

Despite these successes, AI systems have also encountered significant challenges, particularly concerning algorithmic bias. A notable case involves an AI algorithm used in U.S. hospitals that exhibited racial bias by systematically favoring white patients over Black patients in allocating high-risk care management resources. Other notable cases include:

1. Dignity Health – Henderson, Nevada: In the emergency department, an AI system flagged a patient for sepsis, recommending immediate administration of IV fluids. However, upon further examination, the attending nurse determined that the patient did not require such treatment, highlighting concerns about AI’s accuracy in clinical decision-making.

2. NHS Hospitals – England: A study involving medical practitioners from various NHS hospitals revealed mixed perceptions about AI’s role in emergency triage. While some viewed AI systems like DAISY as helpful and accurate, others emphasized the importance of empathy and non-verbal cues in patient interactions, which AI lacks. This underscores the ethical and practical challenges of integrating AI into clinical workflows.

3. U.S. Hospitals Using AI for In-Hospital Mortality Prediction: A study reported by Axios in March 2025 found that AI models designed to predict in-hospital mortality recognized, on average, only 34% of patient injuries, raising concerns about their reliability in critical care settings.

Finding a Balance

While I believe AI has the potential to enhance healthcare efficiency, it should serve as an aid rather than an autonomous decision-maker in ethically charged medical decisions. AI can analyze vast datasets, detect patterns, and provide critical insights, but the final judgment must remain with human professionals who possess empathy, contextual understanding, and ethical reasoning. Physicians and healthcare providers should use AI as a tool to support clinical decisions rather than relying on it to dictate life-and-death choices. This balance ensures that technology complements, rather than replaces, the human element in medicine.

To maintain ethical AI use in healthcare, several safeguards must be in place. Human oversight is essential to review AI-generated recommendations and mitigate errors or biases. Ethical AI design should prioritize transparency, ensuring that healthcare professionals understand how AI reaches its conclusions. Additionally, clear accountability mechanisms must be established so that responsibility for AI-driven decisions is traceable. Governments and healthcare regulators should implement policies that mandate fairness audits, bias mitigation strategies, and continuous monitoring of AI systems to prevent discrimination and uphold patient trust.
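As a rough idea of what a fairness audit could check, the Python sketch below compares how often a model misses truly high-risk patients in each group. This is one possible metric among many, not a regulatory standard; the function names, the record format, the sample data, and the 0.1 tolerance are all assumptions made for illustration.

```python
from collections import defaultdict


def false_negative_rate_by_group(records):
    """Share of truly high-risk patients the model failed to flag, per group.

    `records` is an iterable of (group, flagged_by_model, truly_high_risk)
    tuples. Groups with no high-risk patients are left out of the result.
    """
    missed = defaultdict(int)
    positives = defaultdict(int)
    for group, flagged, high_risk in records:
        if high_risk:
            positives[group] += 1
            if not flagged:
                missed[group] += 1
    return {g: missed[g] / positives[g] for g in positives}


def audit(records, max_gap=0.1):
    """Return per-group miss rates, the largest gap, and a pass/fail flag.

    `max_gap` is a hypothetical tolerance chosen for this example.
    Assumes at least one high-risk patient appears in `records`.
    """
    rates = false_negative_rate_by_group(records)
    gap = max(rates.values()) - min(rates.values())
    return rates, gap, gap <= max_gap


# Invented audit sample: (group, flagged_by_model, truly_high_risk)
sample = [
    ("A", True, True), ("A", True, True), ("A", True, True), ("A", False, True),
    ("B", True, True), ("B", False, True), ("B", False, True), ("B", False, True),
    ("A", False, False), ("B", False, False),
]

rates, gap, passed = audit(sample)
print(rates, gap, passed)
```

In this invented sample the model misses one in four high-risk patients in group A but three in four in group B, so the audit fails. A real audit would need clinically validated outcome labels, much larger samples, and human review of what is driving any gap it finds.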

The Future of AI in Healthcare

AI is transforming healthcare, offering faster diagnoses, predictive analytics, and improved patient management. But alongside these breakthroughs come pressing concerns: bias in algorithms, ethical dilemmas in triage, and the risk of over-reliance on machines for life-and-death decisions. If AI can detect diseases earlier than a doctor and optimize treatment plans, should we fully trust it? Or does the absence of human intuition make it a dangerous gamble when the stakes are highest?

The future of AI in healthcare isn’t about choosing between man and machine; it’s about forging a partnership where technology amplifies human expertise rather than replacing it. We need safeguards, transparency, and oversight to ensure AI remains a tool for good. But here’s the real question: As AI advances, will we grow too comfortable letting algorithms make the hardest decisions? Or should human hands always remain on the wheel, no matter how intelligent the machine becomes?
